A new lawsuit accuses Meta of inflaming the war in Ethiopia

Electronic Frontier Foundation and Nohemi Gonzalez, the Digital Rights Group, argues that Section 230 violates the First Amendment and protects the freedom of information in the Internet

“The free and open internet as we know it couldn’t exist without Section 230,” the Electronic Frontier Foundation, a digital rights group, has written. According to court rulings on Section 230, users can’t be sued for forwarding email, hosting online reviews, or sharing photos or videos that other people find objectionable. The law also helps to quickly resolve lawsuits that have no legal basis.

Nohemi Gonzalez was killed in a restaurant during the Paris terrorist attacks, which targeted the Bataclan concert hall, and her family brought the case. The family’s lawyers argued that YouTube, a subsidiary of Google, had used algorithms to push Islamic State videos to interested viewers, using the information that the company had collected about them.

“Videos that users viewed on YouTube were the central manner in which ISIS enlisted support and recruits from areas outside the portions of Syria and Iraq which it controlled,” lawyers for the family argued in their petition seeking Supreme Court review.

By safeguarding platforms’ freedom to moderate content as they see fit, Democrats have said, Section 230 has allowed websites to escape accountability for hosting hate speech and misinformation that others have recognized as objectionable but that social media companies can’t or won’t remove themselves.

Section 230 was passed in 1996 and does not mention personalization or algorithmic targeting. Its history shows, however, that the law was intended to promote technologies that show, filter, and prioritize user content. Eliminating Section 230 protection for targeted content or personalized technology would therefore require changing the law itself.

The free speech clause of the First Amendment is feeling the strain due to the internet. The modern internet touches virtually every aspect of our lives, and at some level, virtually every part of the internet is speech. Even more than many other large industries, its lack of geographical boundaries has eroded the barriers between local governments: a law regulating websites in Florida might dictate how businesses behave in California or Europe, while conversely, a web business that runs afoul of one country’s laws can quickly hide abroad. A human-run legal system struggles to keep up with the large scale of automated digital connections.

Rather than seriously grappling with technology’s effects on democracy, many lawmakers and courts have channeled a cultural backlash against “Big Tech” into a series of glib sound bites and political warfare. Striking a balance between left and right on internet regulation is more difficult than you might think. Some of the people who are most vocal about defending the First Amendment are the ones who are most willing to part with it.

Attempting to repeal Section 230 is a way for lawmakers to quietly put their thumb on the scale and impose government speech regulations.

Scale of Defamation in Social Media: The Effects of a New Law on Internet-Based Content Reproduction and Editing

No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.

The law was passed in 1996, and courts have interpreted it expansively since then. It effectively means that web services and newspapers cannot be sued for hosting or reposting somebody else’s illegal speech. The law was passed after a pair of seemingly contradictory defamation cases, but it has been found to cover everything from harassment to gun sales. A second clause protects the removal of objectionable content, so courts can also dismiss most lawsuits over web platform moderation.

But judging from other social-media platforms with loose restrictions on speech, a rise in extremism and misinformation could be bad business for a platform with mainstream appeal such as Twitter, says Piazza. “Those communities degenerate to the point to where they’re not really usable — they’re flooded by bots, pornography, objectionable material,” says Piazza. “People will gravitate to other platforms.”

What Happens When the First Amendment Gets Around Its First Amendment? Removing Section 230 Does Not Make Meooravish Science Enough

But making false claims about pandemic science isn’t necessarily illegal, so repealing Section 230 wouldn’t suddenly make companies remove misinformation. The First Amendment protects shaky scientific claims, and there is a good reason for that. Think about how much our understanding of covid changed over time, and imagine people getting sued for publishing good-faith assumptions that were later proven incorrect.

Removing Section 230 protections is a sneaky way for politicians to get around the First Amendment. Without 230, the cost of operating a social media site in the United States would skyrocket due to litigation. Sites could face lawsuits over ambiguously illegal content if they were not able to invoke a straightforward 230 defense. Even if they won in court, web platforms would still be incentivized to remove posts that might be illegal, such as unfavorable restaurant reviews and MeToo allegations. All of this would burn a lot of money and time. It is no wonder that platform operators do everything in their power to keep 230 alive: when politicians complain, the platforms respond.

Source: https://www.theverge.com/23435358/first-amendment-free-speech-midterm-elections-courts-hypocrisy

The Story of Amber Shear: Social Media, Twitter and the First Amendment: What Happened to Amber Heard? The Case of Martin Luther

It’s also not clear whether it matters. During the litigation, Alex Jones declared corporate bankruptcy, leaving Sandy Hook families without a way to chase his money. He treated the court proceedings contemptuously and used them to hawk dubious health supplements to his followers. Legal fees and damages have almost certainly hurt his finances, but the legal system has conspicuously failed to meaningfully change his behavior. If anything, it provided yet another platform for him to declare himself a martyr.

Johnny Depp had filed a lawsuit against Amber Heard, who had publicly stated that she was a victim of abuse, implicitly at his hands. Heard’s case was less cut-and-dried than Jones’, but she lacked Jones’ shamelessness or social media acumen. The case turned into a ritual humiliation of Heard because of the incentives of social media and the courts’ failure to respond to the way that things like livestreams contributed to the media circus. The worst offenders are already beyond shame, while defamation claims can meaningfully hurt people who have to maintain a reputation.

Up to this point, I have mostly addressed bipartisan and Democratic reform proposals because they have a shred of substance to them.

Republican-proposed speech reforms are not good. We’ve learned just how bad over the past year, after Republican legislatures in Texas and Florida passed bills effectively banning social media moderation because Facebook and Twitter were using it to ban some posts from conservative politicians, among countless other pieces of content.

The First Amendment should probably render these bans unconstitutional. They are government speech regulations! But while an appeals court blocked Florida’s law, the Fifth Circuit Court of Appeals threw a wrench in the works with a bizarre surprise decision to uphold Texas’ law without explaining its reasoning. Months later, that court actually published its opinion, which legal commentator Ken White called “the most angrily incoherent First Amendment decision I think I’ve ever read.”

In a blog post last week, Twitter said it had not changed its policies but that its approach to enforcement would rely heavily on de-amplification of violative tweets, something that Twitter already did, according to both the company’s previous statements and Weiss’ Friday tweets. “Freedom of speech,” the blog post stated, “not freedom of reach.”

The Digital Divide: The Power of Software to Rule the Internet for the Good, the Bad, the Gender: Texas, Florida, and Beyond

Three conservative justices, including Clarence Thomas, voted against putting the law on hold. (Liberal Justice Elena Kagan did, too, but some have interpreted her vote as a protest against the “shadow docket” where the ruling happened.)

Only an idiot would support the laws in Texas and Florida. The rules are transparently rigged to punish political targets at the expense of basic consistency. They attack “Big Tech” platforms for their power, conveniently ignoring the near-monopolies of other companies like internet service providers, who control the chokepoints letting anyone access those platforms. There is no saving a movement so intellectually bankrupt that it exempted media juggernaut Disney from speech laws because of its spending power in Florida, then subsequently proposed blowing up the entire copyright system to punish the company for stepping out of line.

And even as they rant about tech platform censorship, many of the same politicians are trying to effectively ban children from finding media that acknowledges the existence of trans, gay, or gender-nonconforming people. On top of getting books pulled from schools and libraries, a Republican state delegate in Virginia dug up a rarely used obscenity law to stop Barnes & Noble from selling the graphic memoir Gender Queer and the young adult novel A Court of Mist and Fury. That suit, in a victory for a functional American court system, was thrown out earlier this year. The panic over grooming affects other Americans as well. Even as Texas tries to stop Facebook from removing violent insurrectionists, it is also trying to prevent the distribution of a film that has been screened at the Venice Film Festival.

But once again, there’s a real and meaningful tradeoff here: if you take the First Amendment at its broadest possible reading, virtually all software code is speech, leaving software-based services impossible to regulate. Airbnb and Amazon have both used Section 230 to defend against claims of providing faulty physical goods and services, an approach that hasn’t always worked but that remains open for companies whose core services have little to do with speech, just software.

Balk’s Law is an oversimplification. Internet platforms change us: they encourage specific kinds of posts and subjects. But still, the internet is humanity at scale, crammed into spaces owned by a few powerful companies. Humans at scale can be very ugly. Vicious abuse may come from one person, or it may be spread out into a campaign of threats, lies, or terrorism involving thousands of different people.

A threat was looming when Musk promised last week that the bird would be freed.

To Ndahinda, however, it is clear that the normalization of hate speech and conspiracy theories on social media could have contributed to violence in the Democratic Republic of Congo, even if academics have not yet been able to delineate its contribution clearly. It’s difficult to trace a causal link from a computer screen to violence. “But we have many actors making public incitements to commit crime, and then later those crimes are committed.”

Currently, Twitter uses a combination of automated and human curation to moderate the discussions on its platform, sometimes tagging questionable material with links to more credible information sources, and at other times banning a user for repeatedly violating its policies on harmful or offensive speech.

When he first announced his plans to take over, Musk promised in an SEC filing that he would transform the platform to serve a functioning democracy. But his actions since have shown that he is helping kill off free speech and democracy in the name of saving it.

These platforms are the most likely place for false narratives to start. When those narratives creep onto mainstream platforms such as Twitter or Facebook, they explode. “They get pushed on Twitter and go out of control because everybody sees them and journalists cover them,” he says.

“When you have people that have some sort of public stature on social media using inflammatory speech — particularly speech that dehumanizes people — that’s where I get really scared,” says James Piazza, who studies terrorism at Pennsylvania State University in University Park. You can have more violence in that situation.

According to Rebekah Tromble, a political scientist at George Washington University in Washington DC, Musk’s free speech rhetoric could be problematic because of European Union regulations. The EU’s Digital Services Act, due to go into effect in 2024, will require social-media companies to mitigate risks caused by illegal content or disinformation. According to Tromble, it would be difficult in practice for platforms to create separate policies for Europe. When it is fundamental systems that are introducing those risks, mitigation measures impact the system as a whole.

The era of Musk at Twitter will start with chaos as users test the boundaries. Then, she says, it is likely to settle down into a system much like the Twitter of old.

Mr. Musk is planning an option that would let users determine whether the company has limited how many other users can view their posts. In doing so, Musk is effectively seizing on an issue that has been a rallying cry among some conservatives who claim the social network has suppressed or “shadowbanned” their content.

“Twitter is working on a software update that will show your true account status, so you know clearly if you’ve been shadowbanned, the reason why and how to appeal,” Musk tweeted on Thursday. He did not provide additional details or a timetable.

His announcement came amid a new release of internal Twitter documents on Thursday, sanctioned and cheered by Musk, that once again placed a spotlight on the practice of limiting the reach of certain, potentially harmful content — a common practice in the industry that Musk himself has seemingly both endorsed and criticized.

The second set of the so-called Twitter Files focused on how the company has restricted the reach of certain accounts and methods of propagation that it deems potentially damaging, such as limiting their ability to be found in the search or trending sections of the platform.

Weiss said that the actions were not known to the users. Twitter may limit certain content and, in some cases, apply strikes that correspond with suspensions for accounts that break its rules. In the case of strikes, users are notified that their accounts have been temporarily suspended.

Feasibility and Democracy of Facebook: The Case of Meareg Amare, the Target of the November 3, 2021, Ethiopian Student Shooting

In both cases, the internal documents appear to have been provided directly to the journalists by Musk’s team. Musk on Friday shared Weiss’ thread in a tweet and added, “The Twitter Files, Part Duex!!” along with two popcorn emojis.

Weiss offered several examples of moderation actions taken on the accounts of right-leaning figures, but it wasn’t clear if such actions were taken against left-leaning or other accounts.

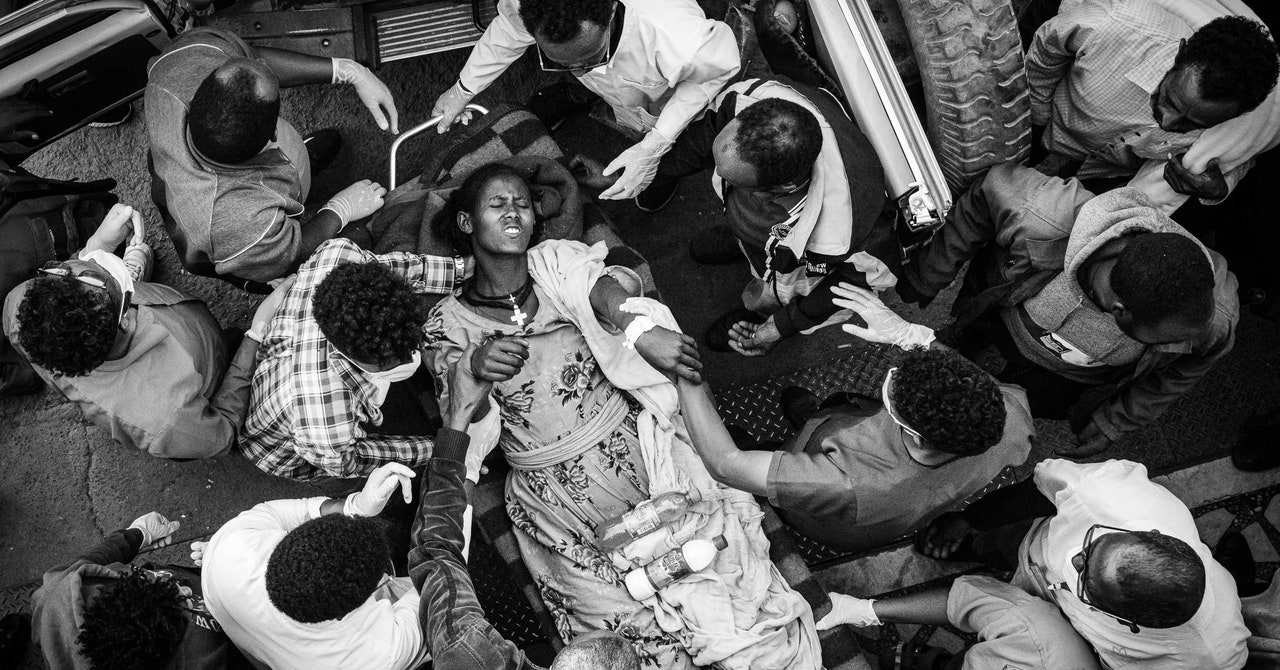

On November 3, 2021, Meareg Amare, a professor of chemistry at Bahir Dar University in Ethiopia, was gunned down outside his home. Amare was the target of a number of Facebook posts in the month prior, which accused him of using the funds to buy property and purchase equipment from the university. People in the comments called for his death. His son, Abrham Amare, tried to get the posts taken down on Facebook but was turned down for weeks. Eight days after his father’s murder, Abrham received a response from Facebook: One of the posts targeting his father, shared by a page with more than 50,000 followers, had been removed.

Today, Abrham, as well as fellow researcher and Amnesty International legal adviser Fisseha Tekle, filed a lawsuit against Meta in Kenya, alleging that the company has allowed hate speech to run rampant on the platform, causing widespread violence. The suit asks the platform to add moderation staff and deprioritise hateful content in order to protect its users.

Platforms can’t be allowed to favor profit at the expense of communities, says the director of a UK nonprofit that deals with human rights abuses by global technology companies, who adds that Facebook has fanned the flames of war in Ethiopia. The organization supports the petition. The company has clear tools available to prevent that from continuing, such as adjusting its software to demote hate, hiring more staff, and making sure its workers are well paid.

Twitter Crimes, Eritrea, and the Rise of the #StopToxicTwitter Coalition: Implications for the Future of Social Media

The war in Ethiopia has been going on since 2020. Prime Minister Abiy Ahmed responded to attacks on federal military bases by sending troops into Tigray, a region in the country’s north that borders neighboring Eritrea. An April report released by Amnesty International and Human Rights Watch found substantial evidence of crimes against humanity and a campaign of ethnic cleansing against ethnic Tigrayans by Ethiopian government forces.

The Supreme Court has scheduled arguments for two major internet moderation cases in February of 2023. Hearings for both the Gonzalez and the Taamneh cases are set for February 21st and February 22nd, respectively.

Editor’s Note: Nora Benavidez is the senior counsel and director of digital justice and civil rights at Free Press, a media and technology justice advocacy organization. Free Press has been associated with the #StopToxicTwitter coalition. The opinions expressed in this commentary are her own.

So when Musk asked his followers Sunday night in an unscientific poll whether he should step down as the head of Twitter, it likely wasn’t surprising to many that more than 57% of respondents answered “yes” – though that probably wasn’t the result Musk expected from a site that’s home to some of his most ardent fans.

Free Press agrees that Musk needs to step aside. But his replacement as CEO needs to be someone who understands at the most basic level that this social media platform will succeed only when it puts the health and safety of its users before the whims of one erratic and reckless billionaire.

The Role of the FCC in Protecting the Freedom of Information from Terrorist Attacks: Comments on the U.S. Supreme Court Action against Section 230

His amnesty to previously suspended accounts has given us the return of neo-Nazis like Andrew Anglin, right-wing activists like Laura Loomer and other figures who have spread hate to millions of followers.

With that said, any new leadership needs to reverse Twitter’s decision to allow Covid-19 misinformation to spread across the network. The blue checkmark feature, which allows verified users to post longer videos and have their content prioritized at the top of replies, mentions and searches, needs to be retired. And Musk’s amnesty for accounts that had been suspended before he took over needs to end.

Tech companies involved in the litigation have cited the 27-year-old statute as part of an argument for why they shouldn’t have to face lawsuits alleging they gave knowing, substantial assistance to terrorist acts by hosting or algorithmically recommending terrorist content.

The law’s central provision holds that websites (and their users) cannot be treated legally as the publishers or speakers of other people’s content. In plain English, that means that the person or entity who created a piece of content is responsible for it, not the platforms where it is shared or re-posted.

The executive order faced a number of legal and procedural problems, not least of which was the fact that the FCC is not part of the judicial branch; that it does not regulate social media or content moderation decisions; and that it is an independent agency that, by law, does not take direction from the White House.

The result is a bipartisan hatred for Section 230, even if the two parties cannot agree on why Section 230 is flawed or what policies might appropriately take its place.

Because of the deadlock, much of the momentum for changing the law has shifted to the courts, and the US Supreme Court has an opportunity to do so this term.

The Supreme Court’s Review of Section 230: Recommending Content to Users or Supporting Acts of International Terrorism

Tech critics are looking for legal exposure and accountability. “The massive social media industry has grown up largely shielded from the courts and the normal development of a body of law,” the Anti-Defamation League wrote in a brief for the Supreme Court, adding that it is irregular for a global industry to be protected from judicial inquiry.

For the tech giants and many of their competitors, it would be a bad thing, because it would undermine what has allowed the internet to flourish. It would potentially put many websites and users into unwitting and abrupt legal jeopardy, they say, and it would dramatically change how some websites operate in order to avoid liability.

Recommendations are the very thing that makes Reddit a vibrant place, according to the company. Users determine which posts gain prominence and which fade into obscurity by upvoting and downvoting content.

People would stop using Reddit, and moderators would stop volunteering, the brief argued, under a legal regime that “carries a serious risk of being sued for ‘recommending’ a defamatory or otherwise tortious post that was created by someone else.”

The outcome of the oral arguments, scheduled for Tuesday and Wednesday, could determine whether tech platforms and social media companies can be sued for recommending content to their users or for supporting acts of international terrorism by hosting terrorist content. It marks the Court’s first-ever review of a hot-button federal law that largely protects websites from lawsuits over user-generated content.

It is an allegation that seeks to carve out the recommendations so that they don’t get the protections of Section 230.

The Biden administration has also weighed in on the case. Section 230 of the Communications Decency Act protects Google and YouTube from lawsuits for failing to remove third-party content, according to a brief filed in December. But, the government’s brief argued, those protections do not extend to Google’s algorithms because they represent the company’s own speech, not that of others.

In any case, the company argues, it cannot be held liable for the content on its platform because hosting that content is not considered to be assistance to the terrorist group. The Biden administration, in its brief, has agreed with that view.

The Texas and Florida Disciplinary Defendants of a Second State Proposal for a High Court Scale Administrative Action

A number of petitions are currently pending asking the Court to review the Texas law and a similar law passed by Florida. The Court last month delayed a decision on whether to hear those cases, asking instead for the Biden administration to submit its views.